About

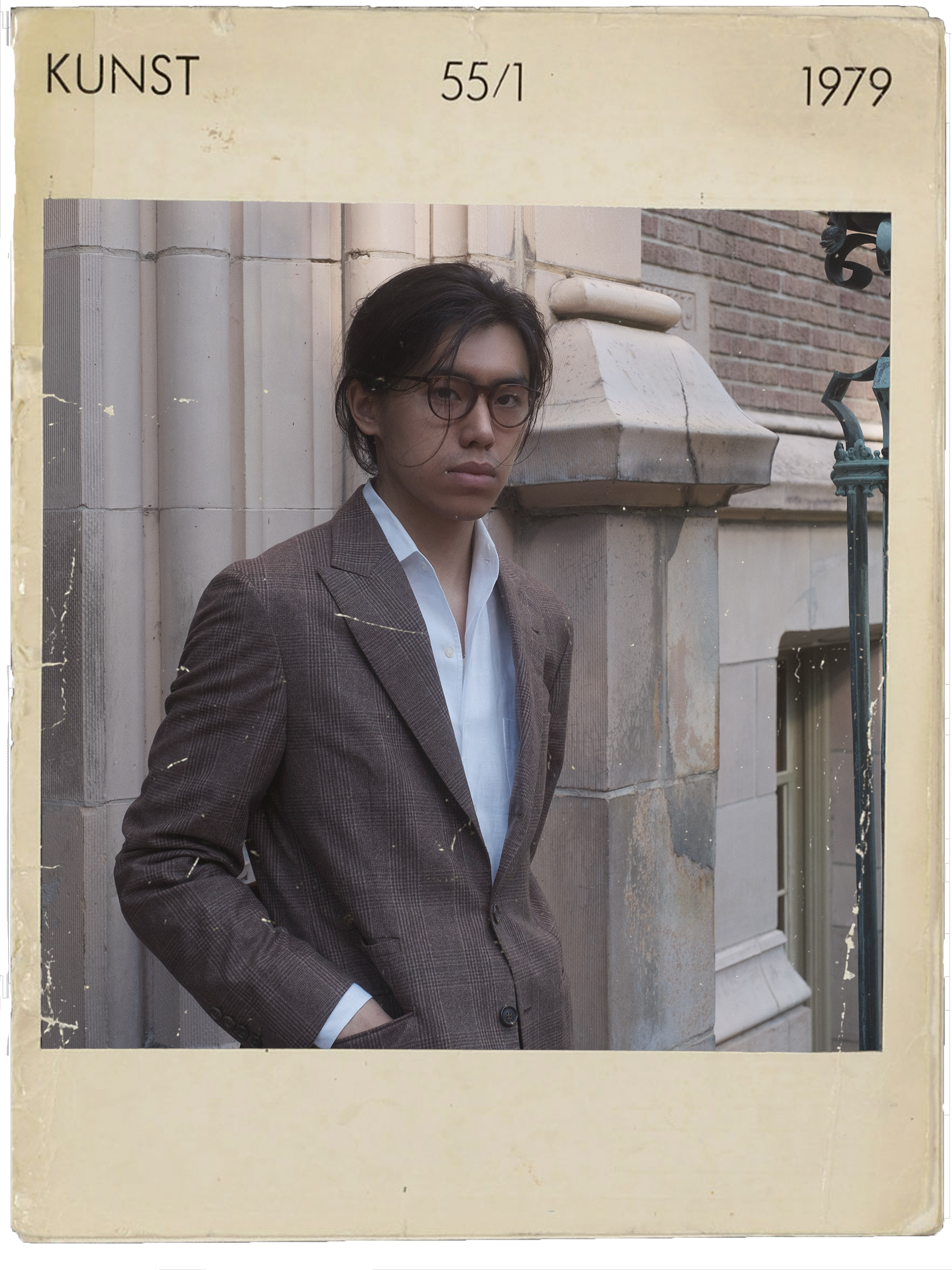

Hello, I’m Junjie Oscar Yin

My research is in natural language processing and machine learning.

I am a first-year PhD student at UW NLP, advised by Hannaneh Hajishirzi.

Previously, I was fortunate to collaborate with Sasha Rush.

In my undergraduate years at Johns Hopkins, I was grateful to be advised by Alan Yuille and supported by the Westgate Scholarship. During my studies, I spent a year at the University of Oxford, where I had the privilege of being mentored by Michael Wooldridge.

Publications

My publications are listed below:

Learning to Detect Language Model Training Data via Active Reconstruction.

Junjie Oscar Yin, John X. Morris, Vitaly Shmatikov, Sewon Min, Hannaneh Hajishirzi.

Preprint

[Paper] [Codebase]

Approximating Language Model Training Data from Weights.

John X. Morris, Junjie Oscar Yin, Woojeong Kim, Vitaly Shmatikov, Alexander M. Rush.

Conference on Language Modeling (COLM), 2025.

[Paper] [Codebase]

Compute-Constrained Data Selection.

Junjie Oscar Yin, Alexander M. Rush.

International Conference on Learning Representations (ICLR), 2025.

[Paper] [Codebase] [Talk]

ModuLoRA: Finetuning 2-Bit LLMs on Consumer GPUs by Integrating with Modular Quantizers.

Junjie Oscar Yin, Jiahao Dong, Yingheng Wang, Christopher De Sa, Volodymyr Kuleshov.

Transactions on Machine Learning Research (TMLR), 2024. (Featured Paper; presented at ICLR 2024)

[Paper] [Codebase] [Blog Post]

Recent Updates

Below you will find the most recent updates:

- 2024 Computing Research Association (CRA) Outstanding Undergraduate Researcher Award (URA). Dec 2023.